On the fence

Many of my teammates have heard me say that the technology part of being a technology consultant is actually the easy one. Machines know how to do their thing, especially when we tell them what to do directly and unambiguously. It is always humanity that makes our lives interesting and, at times, challenging. Psychology, sociology, and anthropology are often as important as the technical correctness of a design.

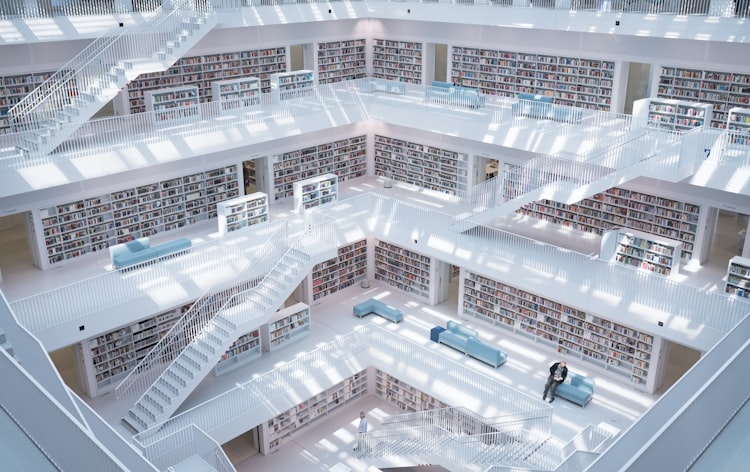

This is why it is important for practitioners of technology architecture to always mind Chesterton's Fence. Farnam Street has a masterful explainer that I would encourage you to read, but it boils down to one basic idea. Simply put, do not remove a fence until you know why it was put up in the first place.

In a way, it is like an architect's Prime Directive or Hippocratic Oath. It does not matter how rigorous or beautifully astute our solution is. If we barge in with big assumptions and good intentions in order to reach a desired state, but we lack awareness of the current context, our solution will not succeed. IT does not mean "Ivory Tower".

Here is a similar take from a different angle. Marshall McLuhan, the philosopher of communication theory who taught us that "the medium is the message" and coined the term "global village", suggests that

> There is absolutely no inevitability as long as there is a willingness to contemplate what is happening.

By the way, the quote is from his book The Medium is the Massage. (No, it is not a typo.)

As we build and design human systems, we are bound to find existing elements of any type (organizational units, applications, processes, job titles, data loads) that look completely wrong, either on their own demerits or in relation to other elements in the landscape. These elements are fences, in the Chesterton sense. Our first impulse is to send these misfits to the chopping block. Sometimes because of merciful and, allegedly, self-evident common sense; sometimes due to our own hubris, as we perceive them as standing in the way of The Big Design. McLuhan echoes Chesterton and tells us that active, deliberate observation (the "willingness to contemplate") is key in dealing with these fences. Before reaching the foregone conclusion (the "inevitability") that the fence must go, we must understand its role as part of a larger human ecosystem.

For those of us dabbling in code and programmatic development, this is very similar to our approach to refactoring when we are practicing test-driven development. Before touching a single line of code in the service being refactored, we must build a series of tests that describe its current behavior, so that we can quickly detect any changes that may impact other services in the system. Only after we have that insurance policy can we start modifying the service. If doing it for something as bluntly deterministic as code seems to be common sense, why should we not do the same with broader, more ambiguously behaved systems?
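To make the insurance policy concrete, here is a minimal sketch of what such tests might look like in Python. Everything in it is made up for illustration: `legacy_shipping_fee`, its thresholds, and the country surcharge are hypothetical stand-ins for a service we have inherited and do not yet understand. The tests do not assert what the code should do; they only record what it does today, odd-looking rule included.

```python
import unittest


def legacy_shipping_fee(order_total: float, country: str) -> float:
    """Hypothetical inherited code; the surcharge below is our 'fence'."""
    fee = 0.0 if order_total >= 100 else 7.5
    # Looks wrong at first glance: why does one country always pay extra?
    # We do not know yet, so we record the behavior before touching it.
    if country == "CH":
        fee += 4.0
    return fee


class CharacterizeShippingFee(unittest.TestCase):
    """Pins down current behavior, not the behavior we wish it had."""

    def test_small_order_pays_base_fee(self):
        self.assertEqual(legacy_shipping_fee(20, "DE"), 7.5)

    def test_large_order_ships_free(self):
        self.assertEqual(legacy_shipping_fee(100, "DE"), 0.0)

    def test_surcharge_survives_free_shipping(self):
        # The fence: the surcharge applies even when base shipping is free.
        self.assertEqual(legacy_shipping_fee(150, "CH"), 4.0)
```

Saved in a file, these can be run with `python -m unittest`. If a refactoring quietly removes the surcharge, the third test fails and forces the conversation about why the fence was there before it disappears.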
